In random forest, we apply the same general bagging technique, using decision trees as the base learners, along with one extra modification. Bagging draws each training sample with replacement, which means that one sample or value can be selected multiple times. The modification is that, to generalize the procedure, random forest also limits the number of variables used for constructing the decision trees: when a node of a decision tree is split, only a randomly selected subset of the variables (features) is considered. Here \(p\) is the total number of variables, and \(m\) is the number of variables selected randomly for a particular node split. A common rule of thumb is to set \(m \approx \sqrt{p}\) for classification problems. In the popular machine learning library scikit-learn, this random forest hyperparameter is known as “max_features”.
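To make the per-split feature selection concrete, here is a minimal sketch using only the Python standard library. The function name `candidate_features` and the default of taking the integer square root of the number of features are assumptions made for illustration; this is not how any particular library implements it, only the idea of drawing a fresh random subset of variables at every node.

```python
import math
import random


def candidate_features(p, m=None, rng=random):
    """Pick m distinct feature indices at random out of p features.

    Hypothetical helper for illustration. If m is not given, fall back
    to the square-root rule of thumb (an assumption, not a requirement).
    """
    if m is None:
        m = max(1, math.isqrt(p))
    # sample() draws WITHOUT replacement, so no feature appears twice
    # among the candidates for a single split.
    return sorted(rng.sample(range(p), m))


rng = random.Random(0)  # fixed seed so the run is repeatable
p = 16                  # total number of features in this toy setting

# Every node split gets its own freshly drawn subset of candidates.
for node in range(3):
    print("node", node, "candidates:", candidate_features(p, rng=rng))
```

With 16 features and the default, each split looks at only 4 candidates, so different trees (and different nodes within a tree) tend to rely on different variables.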

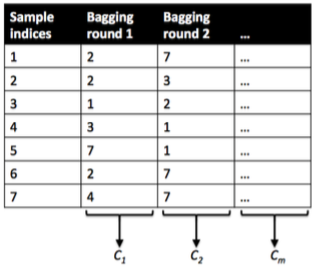

Comparison with decision trees and bagging. Random forest is a way of averaging multiple deep decision trees, trained on different parts of the same training set, with the goal of overcoming over-fitting. It is an extension of bagging that often improves prediction accuracy. You can see random forest as bagging of decision trees with the modification of selecting a random subset of features at each split: the idea is to randomly select \(m\) out of \(p\) predictors as candidate variables for each split in each tree.

Random forest also utilizes another compelling technique called bootstrapping. In short, it takes the original data set and creates a subset for each decision tree, sampling randomly with replacement. Combined with averaging, this is a statistical method that we can use to reduce the variance of machine learning algorithms.

Bagging and random forests are averaging methods, in which the base estimators are built independently and their predictions are averaged (examples: bagging methods, forests of randomized trees). By contrast, in boosting methods, base estimators are built sequentially and one tries to reduce the bias of the combined estimator. The motivation is to combine several weak models to produce a powerful ensemble (examples: AdaBoost, Gradient Tree Boosting).
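The bootstrapping and averaging steps can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions: the names `bootstrap_sample` and `bagged_estimate` are hypothetical, and the "model" fitted on each bootstrap sample is just the sample mean, standing in for a decision tree.

```python
import random


def bootstrap_sample(data, rng):
    """Draw len(data) items from data WITH replacement."""
    n = len(data)
    return [data[rng.randrange(n)] for _ in range(n)]


def bagged_estimate(data, n_estimators=100, seed=0):
    """Average the estimates obtained from many bootstrap samples.

    Each "base model" here is simply the mean of its bootstrap sample,
    a stand-in (assumption) for a tree trained on that sample.
    """
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_estimators):
        sample = bootstrap_sample(data, rng)
        estimates.append(sum(sample) / len(sample))
    return sum(estimates) / len(estimates)


data = list(range(100))
sample = bootstrap_sample(data, random.Random(1))

# Sampling with replacement repeats some items and leaves others out.
print("distinct items in one bootstrap sample:", len(set(sample)))
print("bagged estimate of the mean:", bagged_estimate(data))
```

Because items are drawn with replacement, each bootstrap sample contains duplicates and omits part of the original data, which is what makes the individual models differ from one another before their outputs are averaged.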
